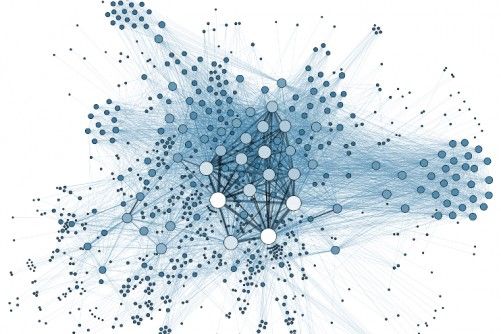

Misinformation can spread through a social network like a disease, while strongly held beliefs can prevent facts and evidence from spreading and taking hold. Courtesy of Martin Grandjean

Tufts University researchers have developed a computer model that closely mirrors the way misinformation spreads in real life. The work might provide insight into how to protect people from the current contagion of misinformation that threatens public health and the health of democracy, the researchers say.

“Our society has been grappling with widespread beliefs in conspiracies, increasing political polarization, and distrust in scientific findings,” said Nicholas Rabb, a Ph.D. student in computer science at the Tufts School of Engineering and lead author of the study, which was published January 7 in the journal PLOS ONE. “This model could help us get a handle on how misinformation and conspiracy theories are spread, to help come up with strategies to counter them.”

Scientists who study the dissemination of information often take a page from epidemiologists, modeling the spread of false beliefs on how a disease spreads through a social network. Most of those models, however, treat everyone in the network as equally likely to adopt any new belief passed on to them by their contacts.

The Tufts researchers instead based their model on the notion that our pre-existing beliefs can strongly influence whether we accept new information. Many people reject factual information supported by evidence if it takes them too far from what they already believe. Health-care workers have commented on the strength of this effect, observing that some patients dying from COVID cling to the belief that COVID does not exist.

To account for this, the researchers assigned a “belief” to each individual in the artificial social network, represented by a number from 0 to 6, with 0 representing strong disbelief and 6 representing strong belief. The numbers could represent the spectrum of beliefs on any issue.

For example, 0 might represent the strong disbelief that COVID vaccines help and are safe, while 6 might represent the strong belief that COVID vaccines are in fact safe and effective.

The model then creates an extensive network of virtual individuals, as well as virtual institutional sources that originate much of the information that cascades through the network. In real life those could be news media, churches, governments, and social media influencers: basically, the super-spreaders of information.

The model starts with an institutional source injecting information into the network. If an individual receives information that is close to their current belief (for example, a 5 when they hold a 6), they are more likely to update their belief to 5. If the incoming information differs greatly from their current belief (say, a 2 compared to a 6), they will likely reject it outright and keep their belief at 6.
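The single-step update rule described above can be sketched in a few lines of Python. This is an illustrative sketch only: the logistic shape of the acceptance curve and the parameters `ALPHA` and `BETA` are assumptions made for this example, not the function used in the published model. The only feature taken from the article is that the chance of accepting a message shrinks as the gap between the message and the listener's current belief grows.

```python
import math
import random

# Hypothetical parameters chosen for illustration; not from the study.
ALPHA = 4.0  # steepness: how sharply acceptance drops as beliefs diverge
BETA = 2.0   # belief distance at which acceptance probability is 0.5


def acceptance_probability(current: int, incoming: int,
                           alpha: float = ALPHA, beta: float = BETA) -> float:
    """Probability that an agent holding `current` (0-6) accepts `incoming`.

    Assumed logistic form: small gaps are usually accepted,
    large gaps are almost always rejected.
    """
    distance = abs(current - incoming)
    return 1.0 / (1.0 + math.exp(alpha * (distance - beta)))


def update_belief(current: int, incoming: int, rng: random.Random) -> int:
    """Return the agent's belief after hearing `incoming` once."""
    if rng.random() < acceptance_probability(current, incoming):
        return incoming  # message accepted: belief moves to the new value
    return current       # message rejected: belief unchanged


for message in (5, 2):
    p = acceptance_probability(6, message)
    print(f"belief 6, message {message}: P(accept) = {p:.3f}")
# belief 6, message 5: P(accept) = 0.982
# belief 6, message 2: P(accept) = 0.000
```

With these made-up parameters, a believer at 6 almost always accepts a 5 and almost never accepts a 2, matching the qualitative behavior the researchers describe.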

Other factors, such as the proportion of their contacts who send them the information (basically, peer pressure) or their level of trust in the source, can also influence how individuals update their beliefs. Running these interactions across a population-wide network then provides a dynamic view of how misinformation propagates and how long it persists.
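A rough sketch of how these pieces could fit together into a network-wide cascade follows, again in Python. Everything here is hypothetical: the random (Erdős–Rényi) network, the `trust` multiplier on the institutional source, the rule that peer pressure scales with the fraction of an agent's contacts who have already adopted the message, and all parameter values are illustrative assumptions rather than the researchers' actual model.

```python
import math
import random


def acceptance_probability(current, incoming, alpha=4.0, beta=2.0):
    # Hypothetical logistic rule: acceptance falls as beliefs diverge.
    return 1.0 / (1.0 + math.exp(alpha * (abs(current - incoming) - beta)))


def random_network(n, p, rng):
    """Erdős–Rényi graph as an adjacency list (stand-in for a social network)."""
    neighbors = {i: set() for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            if rng.random() < p:
                neighbors[i].add(j)
                neighbors[j].add(i)
    return neighbors


def cascade(beliefs, neighbors, followers, message, trust, rng, max_rounds=50):
    """Spread one message from an institutional source through the network.

    Returns the updated beliefs and the set of agents who adopted the message.
    """
    beliefs = list(beliefs)
    adopters = set()
    # Round 0: the source reaches its followers directly, discounted by trust.
    for agent in followers:
        if rng.random() < trust * acceptance_probability(beliefs[agent], message):
            beliefs[agent] = message
            adopters.add(agent)
    # Later rounds: adopters pass the message on to their contacts.
    for _ in range(max_rounds):
        new = set()
        for agent, nbrs in neighbors.items():
            if agent in adopters or not nbrs:
                continue
            sharing = len(nbrs & adopters)
            if sharing == 0:
                continue  # nobody this agent knows is sharing the message yet
            peer_fraction = sharing / len(nbrs)  # crude "peer pressure"
            p = peer_fraction * acceptance_probability(beliefs[agent], message)
            if rng.random() < p:
                new.add(agent)
        if not new:
            break  # the cascade has stalled
        for agent in new:
            beliefs[agent] = message
        adopters |= new
    return beliefs, adopters


rng = random.Random(42)
n = 200
beliefs = [rng.randint(0, 6) for _ in range(n)]
net = random_network(n, 0.05, rng)
followers = rng.sample(range(n), 20)
new_beliefs, adopters = cascade(beliefs, net, followers, message=6, trust=0.8, rng=rng)
print(f"{len(adopters)} of {n} agents adopted belief 6")
```

Multiplying the acceptance probability by `trust` and by the sharing fraction is just one simple way to combine these factors; the published model may weight them quite differently.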

Future improvements to the model will take into account new knowledge from both network science and psychology, and will compare the model's results with real-world opinion surveys and network structures over time.

While the current model assumes that beliefs can change only incrementally, other scenarios could be modeled that cause a larger shift in beliefs. One example is a jump from 3 to 6, which could occur when a dramatic event happens to an influencer who then pleads with their followers to change their minds.

Over time, the computer model can become more complex to accurately reflect what is happening on the ground, say the researchers, who in addition to Rabb include his faculty advisor Lenore Cowen, a professor of computer science; computer scientist Matthias Scheutz; and J.P. de Ruiter, a professor of both psychology and computer science.

“It’s becoming all too clear that simply broadcasting factual information may not be enough to make an impact on public mindset, particularly among those who are locked into a belief system that is not fact-based,” said Cowen. “Our initial effort to incorporate that insight into our models of the mechanics of misinformation spread in society may teach us how to bring the public conversation back to facts and evidence.”

Source: Tufts University