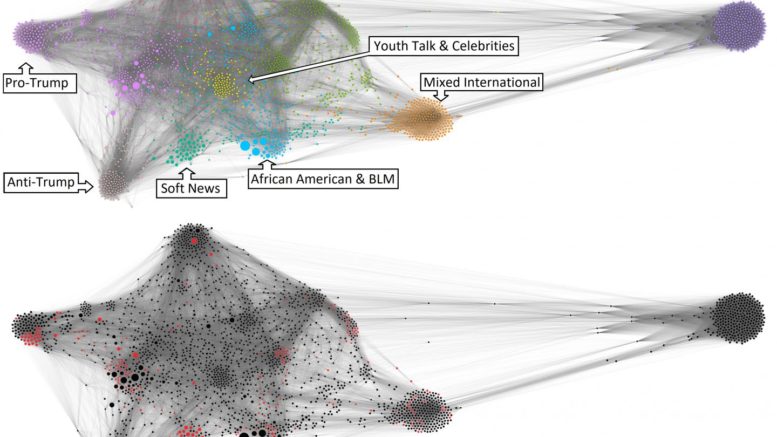

Note: BLM = Black Lives Matter; IRA = Internet Research Agency. Fig. 2a (top) and 2b (bottom): Nodes (circles) represent the various Internet Research Agency accounts. Edges (lines) represent topical similarity between accounts. Size of nodes indicates accounts' reach, in terms of retweets. Color in Figure 2a indicates the different personas (the topical type of account). Color in Figure 2b indicates discussion of vaccines by the account (red indicates that the account mentioned vaccines at least once between 2015 and 2017; black indicates no vaccine mentions). Courtesy of D. Walter

This study encompassed more than 2.8 million tweets published from 2015 to 2017 by 2,689 accounts operated by the Russian Internet Research Agency (IRA). Researchers identified nine types of troll personas, from fake Black Lives Matter activists to fake boosters of Donald Trump, and examined whether and how those persona types discussed vaccination.

The analysis was conducted by researchers at the Annenberg Public Policy Center (APPC) of the University of Pennsylvania, Georgia State University, and the University at Buffalo, SUNY. The study, "Russian Twitter Accounts and the Partisan Polarization of Vaccine Discourse, 2015-2017," was published in March in the American Journal of Public Health.

"We demonstrate how IRA accounts discussed vaccines not only to sow discord among people of the United States but also to flesh out the personalities of their 'American' accounts in a credible way," the researchers wrote.

Although the vaccination tweets made up a small portion of the messaging from these accounts over the three years, the trolls used pro- and anti-vaccination tweets to help establish realistic-seeming partisan identities. By politicizing vaccination, the Russian trolls could potentially affect attitudes, promote vaccination hesitancy, and magnify health disparities, the researchers said.

"Russian trolls worked to polarize Americans on a health topic that is not supposed to be political," said co-author Yotam Ophir, an assistant professor of communication at the University at Buffalo and a former postdoctoral fellow at APPC. "As our nation deals with the coronavirus pandemic, that type of politicization poisons the well of crisis communications for COVID-19 in ways that create tensions, mistrust and, potentially, a lack of intention to comply with government orders and health directives."

Ophir co-authored the paper with Dror Walter, of Georgia State University, and Kathleen Hall Jamieson, director of the Annenberg Public Policy Center, which supported the research.

The researchers expanded on past studies of Russian attempts to sow discord during the 2016 election and of IRA tweets on vaccination. The current work covered the full set of IRA Twitter activity over the three years, more than 2.8 million tweets in all. Among those tweets, the researchers identified 1,968 that discussed vaccination.

"We first used unsupervised machine learning to map the various topics IRA accounts were talking about," said Walter, the lead author and an assistant professor of communication at Georgia State University. "We used network analysis to group together accounts that tended to discuss the same topics and used the same language. With this method we were able to identify nine different groups of users, which we call 'thematic personas.' We then analyzed computationally and manually how each group discussed the issue of vaccines."
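To make the grouping step Walter describes more concrete, here is a minimal sketch of how accounts might be clustered by topical similarity. It is an illustration only and not the researchers' actual pipeline: the account names, topic proportions, similarity threshold, and the choice of cosine similarity with connected components (in place of the study's topic modeling and network analysis, whose details the article does not give) are all assumptions.

```python
from math import sqrt

# Hypothetical toy data (not from the study): each account's share of
# tweets per topic, in the assumed order [politics, celebrity, news].
ACCOUNT_TOPICS = {
    "acct_a": [0.90, 0.05, 0.05],
    "acct_b": [0.80, 0.10, 0.10],
    "acct_c": [0.05, 0.90, 0.05],
    "acct_d": [0.10, 0.85, 0.05],
    "acct_e": [0.10, 0.10, 0.80],
}


def cosine(u, v):
    """Cosine similarity between two topic-proportion vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def build_edges(topics, threshold=0.8):
    """Link two accounts when their topic mixes are similar enough.

    The 0.8 threshold is an arbitrary value chosen for illustration.
    """
    names = sorted(topics)
    edges = {name: set() for name in names}
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if cosine(topics[a], topics[b]) >= threshold:
                edges[a].add(b)
                edges[b].add(a)
    return edges


def group_accounts(edges):
    """Return connected components, a simple stand-in for community detection."""
    seen = set()
    groups = []
    for start in sorted(edges):
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(edges[node] - component)
        seen |= component
        groups.append(sorted(component))
    return groups


if __name__ == "__main__":
    for group in group_accounts(build_edges(ACCOUNT_TOPICS)):
        print(group)
```

In this toy setup, accounts with near-identical topic mixes end up in the same group, which loosely mirrors the idea of a "thematic persona." A real analysis would derive the topic proportions from the tweets themselves and would likely use a dedicated community-detection algorithm rather than simple connected components.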

Among those personas, for instance, were one "thematic community" focused on tweeting links to hard news updates and one focused on soft news; one that was clearly pro-Trump and one that was clearly anti-Trump; one that specialized in youth talk and celebrities; one that imitated African American users in topics (Black Lives Matter activism) and language; and one that focused on Ukraine. Others focused on international topics and on retweets and trendy "hashtag games."

The researchers found striking differences in the ways different personas talked about vaccines, with the biggest differences falling across political lines. They said that the trolls attempted to cater to audiences of different political inclinations with targeted messages based on their perceived opinions about vaccines. Simply put, pro-Trump personas and African American personas were much more likely to express anti-vaccine sentiment than the anti-Trump, liberal personas.

Specifically, of the accounts using the pro-Trump persona, 17% mentioned vaccines at least once, and more than half of their vaccine tweets were anti-vaccination. Among the accounts adopting the liberal, anti-Trump persona, only 2% mentioned vaccines; about half of their vaccine tweets were neutral on vaccination and over a third were pro-vaccine.

About 11% of the accounts imitating African American users mentioned vaccines. While the tweets mentioning vaccines made up a very small percentage of the total from these accounts, the sentiment among the tweets that did discuss vaccines was roughly balanced, leaning slightly more negative than positive.

"As COVID-19 spreads disease and death across the globe and scientists race to develop treatments and a vaccine against it, I expect the Russian discourse saboteurs to resurrect the behaviors we isolated in this study," said Jamieson, the author of "Cyberwar: How Russian Hackers and Trolls Helped Elect a President," which was published in 2018 by Oxford University Press and will be released in an updated paperback edition in 2020.

The researchers concluded: "Even if small in magnitude, the intentional Russian spread of antivaccine discourse targeted at specific subpopulations that are susceptible to it (i.e., pro-Trump users and African Americans on Twitter) could be the beginning of a new front in the ongoing informational cyberwar."

Source: Annenberg Public Policy Center
